Reproductive Health · Health Equity · Social Justice

Insider. AI Strategist.

25 years inside the reproductive health movement. AI strategy built for it. I help mission-driven organizations use AI that protects patient access, fights misinformation, and doesn't compromise the communities they exist to serve.

Most AI Wasn't Built for This Work.

Your staff is already using AI. Your board is already asking about it. And the tools being built by the tech industry weren't designed with your patients, your politics, or your risk profile in mind.

The question isn't whether AI is coming to sexual and reproductive health (SRH). It's whether someone who actually knows what's at stake will lead you through it, or whether you'll be left to figure it out alone.

- AI is already in your org, and leadership doesn't know. Staff are using it in patient messages, grant narratives, and clinical summaries right now. That's not a future risk. It's a present liability with no policy, no oversight, and no paper trail.

- Every vendor claims to be ethical. None of them know your context. HIPAA-compliant doesn't mean safe in a post-Dobbs, high-surveillance environment. Without a framework built for SRH specifically, you're evaluating tools blind.

- You're being asked to lead on AI with zero support. Your board is asking. Your funders are asking. Your staff is asking. And you're googling "AI policy for nonprofits" at 11pm, trying to figure out where to even start.

- The equity and ethics questions are real, and they don't have easy answers. Who does this technology center? Who does it harm? What happens when an algorithm makes decisions about communities that have already been failed by systems? These aren't abstract concerns. They're the work.

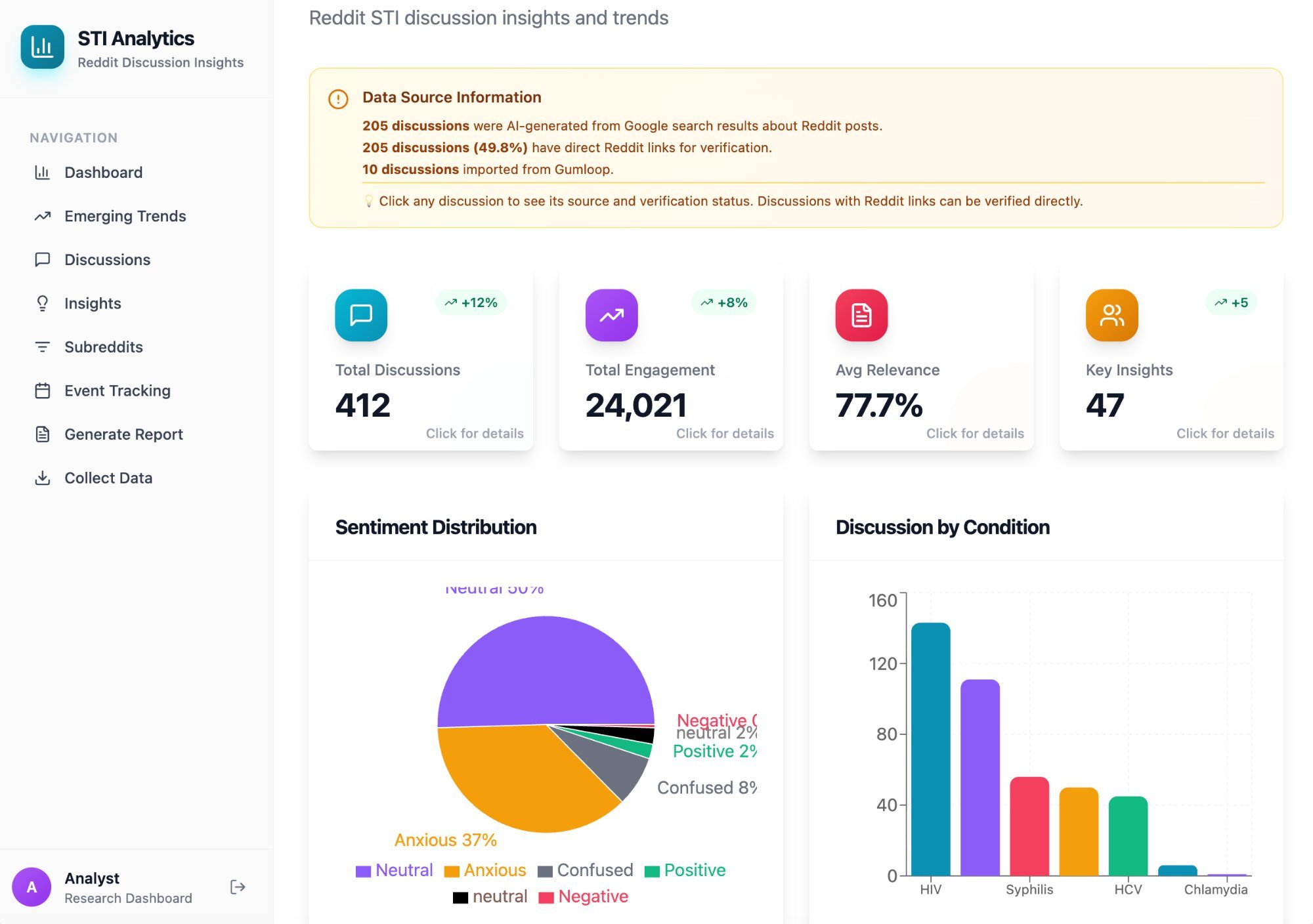

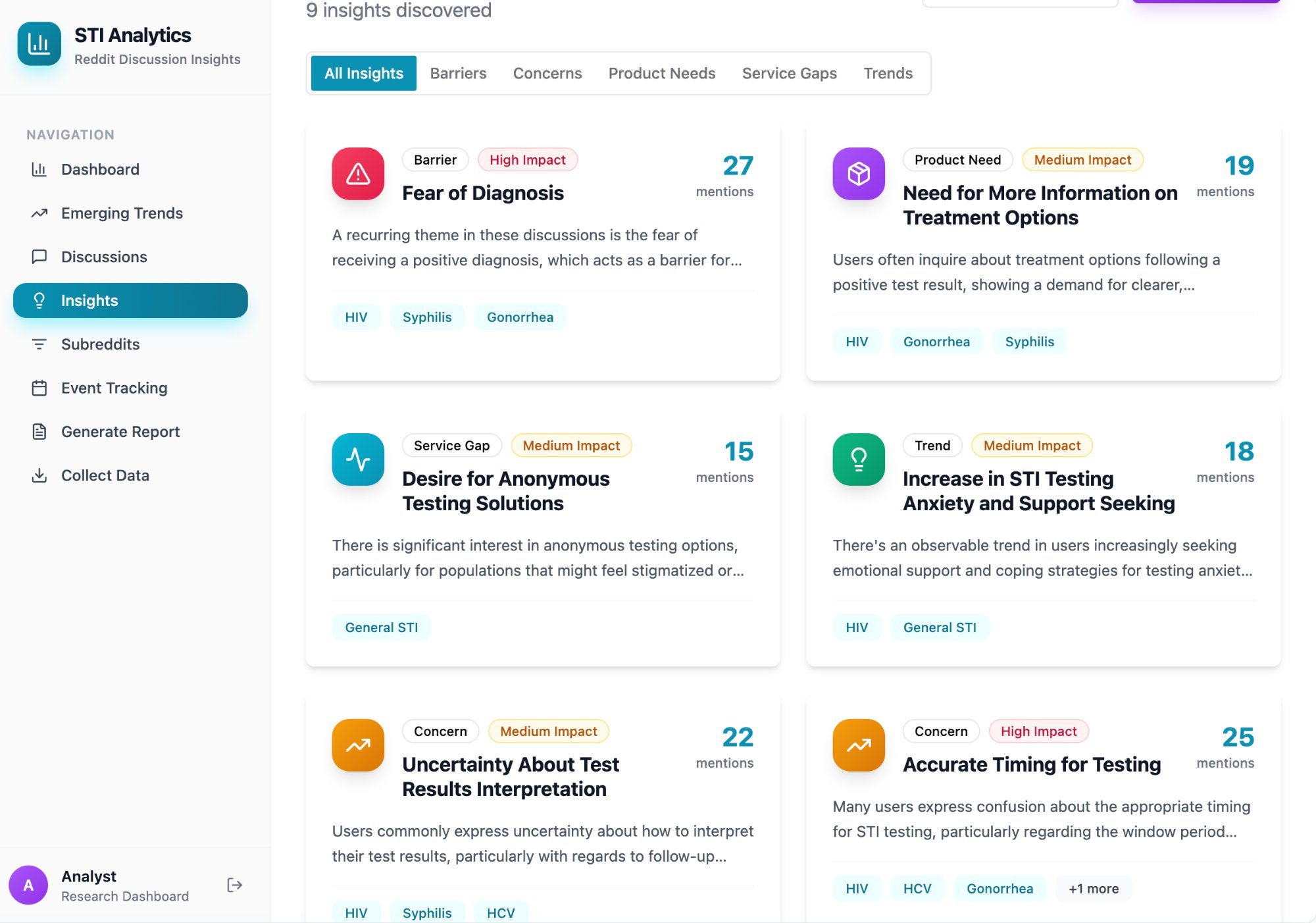

SRH Misinformation Intelligence Pipeline.

An AI-powered misinformation detection system built for sexual and reproductive health organizations. It scrapes TikTok and YouTube for SRH content, transcribes the audio from each video, scores the claims it finds against medical sources, groups related claims into trends by semantic similarity, and delivers provider-ready intelligence digests, complete with talking points and links to viral posts, every one to two weeks.

Built for Power to Decide in partnership with a national provider-facing SRH platform. Hybrid human-AI validation at every stage.

Built for This Work.

AI Audit & Readiness Assessment

Before you adopt anything new, understand what's already in your org. I map where AI is showing up — officially and unofficially — and what risks it carries in your specific context.

AI Strategy Consulting

One-on-one strategic advising to build a values-aligned AI roadmap. I identify the right use cases, flag the real risks, and help you move forward with clarity and confidence.

Workshops & Team Training

Custom training for SRH teams navigating AI — from "what is this?" to "how do we govern it?" I meet your team where they are and build real capability.

SRH Tool Design & Technical Partnership

I don't build the tools — I make sure they get built right. I sit alongside your technical teams as the SRH expert, translating movement knowledge into product requirements and keeping equity at the center.

AI Governance & Ethical Policy

If you're already using AI tools, I review them for bias, privacy risk, and ethical alignment — then help you build internal policies that hold your technology accountable.

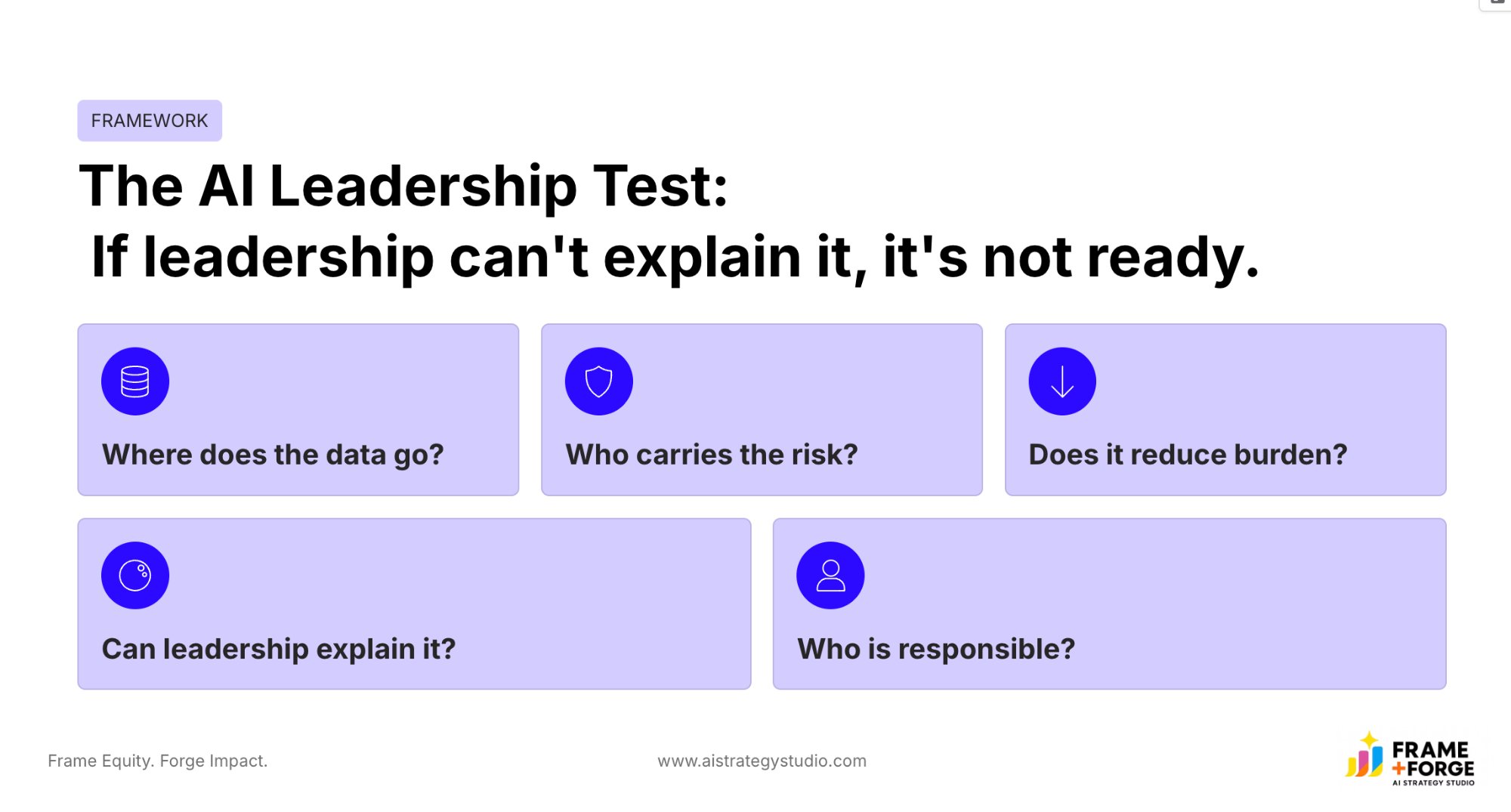

Executive & Board Advising

Strategic advising for executive directors, senior leaders, and board members, building the confidence and frameworks to lead AI conversations with authority: with the board, with funders, and across the organization.

Speaking & Keynotes

Conferences, convenings, board retreats, funder briefings. I bring 25 years of SRH experience and hands-on AI implementation to every stage, a combination that's rare in this field.

Ready to Talk?

Every engagement starts with a free 30-minute call. No pitch deck. No pressure. Just a direct conversation about where you are and what's actually possible.

From the People Who've Seen the Work.

The Body Is the Interface.

A justice-driven exploration of how artificial intelligence is reshaping sexual and reproductive health — and what it means to design digital systems that center care, autonomy, and equity from the start.